Live from NVIDIA GTC: 6 Lanchi Ventures Portfolio Companies Take the Stage to Tackle 6 Technical Challenges in AI Deployment

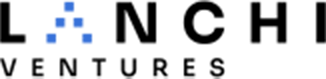

NVIDIA GTC is currently in full swing in San Jose. Among the “AI-Native Enterprises” highlighted by Jensen Huang, AGIBOT was featured in the “AI for Robots” category, while Moonshot AI (Kimi) stood out among the “Frontier Model Builders.”

Both companies are proud members of the Lanchi Ventures portfolio.

Image Source: NVIDIA

But our presence at GTC goes even deeper. This year, founders and technical leaders from six Lanchi Ventures-backed companies took the stage to share their insights: Zhilin Yang (Founder, Moonshot AI), Kaihua Zhu (Co-founder & CTO, Genspark), He Wang (Founder, Galbot), Mo Wu (Head of Simulation, AGIBOT), Peng Jia (CEO, Simplexity Robotics), and Kun Zhan (Foundation Model Lead, Li Auto).

These six companies are tackling the real, structural challenges as AI transitions from “lab-bound technology” to “real-world application.” While their technical paths vary, they converge on a single truth: In the race toward deployment, the scarcest resource is not a concept, but the ability to define the core problem and make the right architectural choices.

Here’s a front-line dispatch from the Lanchi family at GTC. As an early-stage investment firm, we continuously focus on those who define and deconstruct these core problems. We look forward to more founders joining us on this journey.

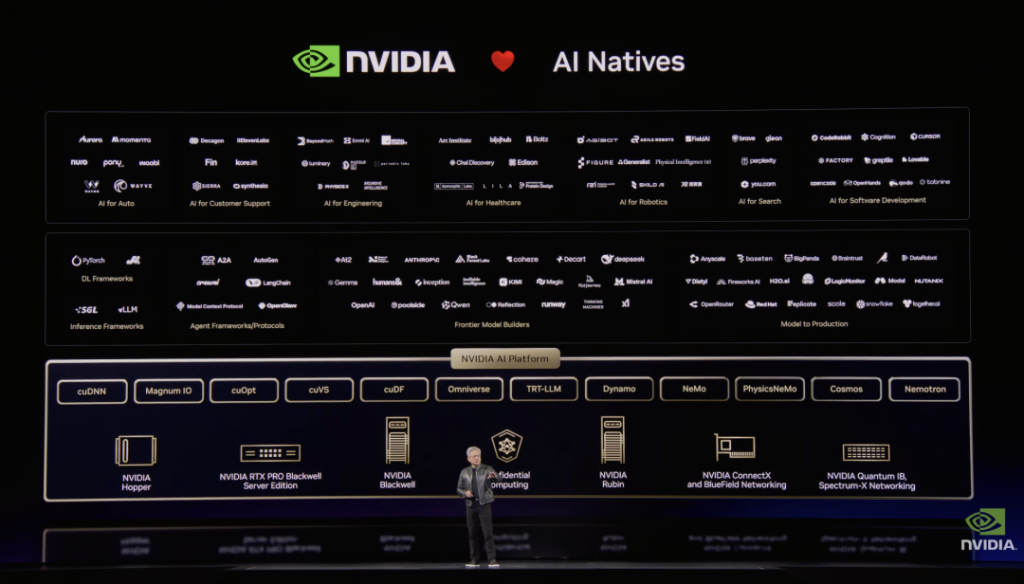

1. Breaking the Scaling Law Ceiling

Image Source: Jiaziguangnian

Company: Moonshot AI (Kimi)

The Challenge: Is the Scaling Law hitting a wall? While the industry debates whether “brute force” compute is still the answer, Kimi founder Zhilin Yang offers a provocative take: The real bottleneck isn’t compute—it’s our reliance on “legacy technologies” that we’ve come to accept as default.

The Solution: The Kimi team took a “first-principles” approach, deconstructing and rewriting foundational components—optimizers, attention mechanisms, and residual connections—that haven’t been touched in years.

- Overhauling the Optimizer: Replaced the industry-standard Adam family of optimizers with the more efficient Muon optimizer. To address training instability, the team developed and open-sourced MuonClip, reporting 2x the computational efficiency of traditional AdamW.

- Challenging the “Gold Standard” of Attention: Broke the convention that all layers require full attention, proposing a hybrid linear architecture (Kimi Linear) that boosts decoding speeds by 5-6x in ultra-long contexts.

- Fixing Residual Connections: Introduced Attention Residuals to prevent information dilution in deep networks—a move that earned public praise from industry icons like Andrej Karpathy and Elon Musk.
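
To make the optimizer change more concrete, here is a minimal sketch of the idea publicly described for Muon: apply momentum to the gradient of a 2-D weight matrix, then orthogonalize that update with a Newton-Schulz iteration before taking the step. This is an illustration under stated assumptions, not Moonshot AI's implementation. It uses the classical cubic iteration for readability rather than tuned coefficients, it leaves out the MuonClip stabilization entirely, and the function names, `lr`, and `beta` are hypothetical.

```python
# Illustrative sketch only; this is NOT Moonshot AI's code.
# Idea: apply momentum, then replace the update matrix with an
# approximately orthogonal one before stepping.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def orthogonalize(matrix, steps=30):
    """Newton-Schulz iteration (classical cubic form): pushes every nonzero
    singular value toward 1 using only matrix multiplications, no SVD."""
    norm = sum(v * v for row in matrix for v in row) ** 0.5 + 1e-12
    x = [[v / norm for v in row] for row in matrix]  # singular values <= 1
    for _ in range(steps):
        cubic = matmul(matmul(x, transpose(x)), x)   # X X^T X
        x = [[1.5 * p - 0.5 * q for p, q in zip(rp, rq)]
             for rp, rq in zip(x, cubic)]
    return x

def muon_like_step(weight, grad, momentum, lr=0.02, beta=0.95):
    """One update for a 2-D weight matrix; lr and beta are assumed values."""
    momentum = [[beta * m + g for m, g in zip(rm, rg)]
                for rm, rg in zip(momentum, grad)]
    update = orthogonalize(momentum)
    weight = [[w - lr * u for w, u in zip(rw, ru)]
              for rw, ru in zip(weight, update)]
    return weight, momentum

zeros = [[0.0, 0.0], [0.0, 0.0]]
grad = [[3.0, 1.0], [1.0, 2.0]]
new_weight, new_momentum = muon_like_step(zeros, grad, zeros)
```

The point of the orthogonalization is that every direction in the update gets a similar step size, instead of a few dominant directions swamping the rest. In the toy example the gradient is symmetric positive definite, so the orthogonalized update is close to the identity matrix.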

🔑 Takeaway: When brute-force scaling yields diminishing returns, revisiting “default-correct” foundational architectures is the key to the next frontier of intelligence.

2. Solving for the “Long Horizon”: Can AI Handle Multi-Day Tasks?

Company: Genspark

The Challenge: Most users interact with AI in short bursts. But Kaihua Zhu, Co-founder & CTO of Genspark, asks a tougher question: Can your AI maintain high-quality execution over several continuous days?

The Solution: Transitioning from simple search to Long-Horizon Agents requires a radical shift in architecture and technical trade-offs:

- Advanced Task Orchestration: Utilizing model routing and tool execution for complex, multi-step workflows.

- Mitigating Error Accumulation: Identifying failure modes like “partial execution” and “compounding errors” that often plague long-duration runs.

- Trajectory Correction: Implementing “checkpoints” and self-correction mechanisms to ensure agents stay on course during large-scale operations.
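
The checkpoint idea in the list above can be sketched in a few lines. This is a hypothetical illustration, not Genspark's architecture: each step runs against a copy of the last verified state, a validator decides whether the result is committed, and a failed attempt is discarded and retried. The helper `chain_success` shows why this matters, assuming steps fail independently: small per-step error rates compound quickly over long runs. All names here are invented for the example.

```python
import copy

def chain_success(per_step_success, num_steps):
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_success ** num_steps

def run_with_checkpoints(steps, validate, state, max_retries=2):
    """Run steps in order, committing state only after validation passes.
    A failed attempt is discarded and retried from the last checkpoint."""
    checkpoint = copy.deepcopy(state)
    attempts_used = []
    for index, step in enumerate(steps):
        for attempt in range(max_retries + 1):
            candidate = step(copy.deepcopy(checkpoint), attempt)
            if validate(candidate):
                checkpoint = candidate          # commit the verified state
                attempts_used.append(attempt + 1)
                break
        else:
            raise RuntimeError(f"step {index} failed after {max_retries + 1} attempts")
    return checkpoint, attempts_used

# Hypothetical steps: the second one leaves partial output on its first try.
def gather(state, attempt):
    state["notes"].append("sources gathered")
    return state

def draft(state, attempt):
    state["notes"].append("draft written" if attempt > 0 else "draft PARTIAL")
    return state

def no_partial_output(state):
    return not any("PARTIAL" in note for note in state["notes"])

final_state, attempts_used = run_with_checkpoints(
    [gather, draft], no_partial_output, {"notes": []})
```

Because each attempt works on a copy, the partial draft never reaches the committed state. Under the independence assumption, `chain_success(0.99, 100)` comes out at roughly 0.37, so even a 99% reliable step is not enough on its own for a hundred-step run.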

🔑 Takeaway: As AI enters the realm of long-cycle tasks, reliability becomes a more valuable currency than “raw intelligence.”

Preview: Kaihua Zhu’s talk is scheduled for Friday, March 20th at 3:00 AM (Beijing Time). Stay tuned!

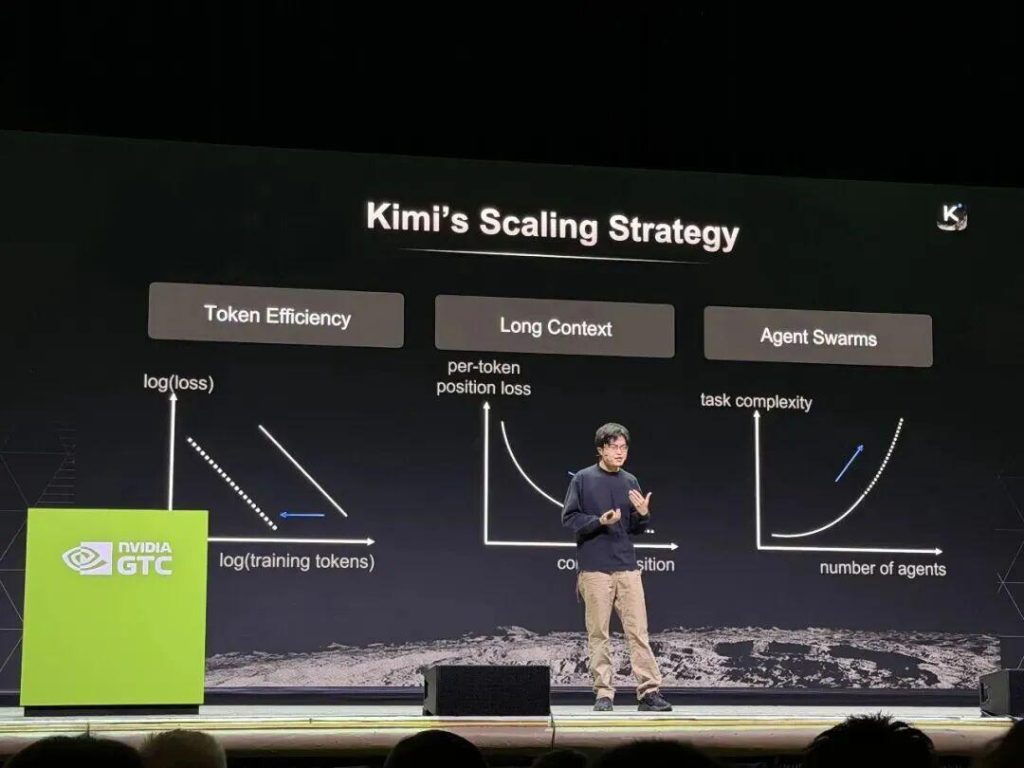

3. The “Data Pyramid”: Solving the High Cost of Real-World Training

Company: Galbot

The Challenge: “The biggest hurdle in Embodied AI today is the prohibitive cost of real-world data,” notes Galbot founder He Wang. Teleoperation and motion capture are too slow to scale. To reach the trillions of data points required for General Intelligence, we cannot rely on physical collection alone.

The Solution: He Wang proposed a “Data Pyramid” architecture. The base consists of internet-scale video/text; the middle layer leverages human behavior data; the core is built on multi-entity synthetic simulation data, with real-world data forming the final feedback loop. This powered Galbot Brain, the world’s first end-to-end foundation model integrating “high-level planning and low-level control” (Big Brain & Small Brain).

- Simulation-Honed Precision: From juggling walnuts to precision food prep, these skills are refined in simulation first rather than learned from teleoperated demonstrations.

- Zero-Shot Generalization: By generating massive randomized scenes in sim, Galbot can handle “uncooperative” objects—like transparent bottles or soft fabrics—in the real world without prior training.

- Proven Scale: Already deployed in smart pharmacies across 24 cities and retail kiosks in 100+ locations, proving that simulation-driven data is a commercial reality.
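
The "massive randomized scenes" idea is commonly called domain randomization. A minimal sketch, assuming a simulator that accepts a scene description as a dictionary: sample object material, friction, lighting, and pose from wide ranges so that a policy trained on the result has already seen many variations before it meets a real object. The parameter names and ranges below are hypothetical and are not taken from Galbot's pipeline.

```python
import random

# Hypothetical parameter ranges; real simulators expose many more knobs.
MATERIALS = ["rigid", "transparent", "soft_fabric", "reflective"]

def sample_scene(rng):
    """Draw one randomized scene description from the assumed ranges."""
    return {
        "material": rng.choice(MATERIALS),
        "friction": rng.uniform(0.2, 1.0),
        "light_intensity": rng.uniform(0.3, 1.5),
        "object_yaw_deg": rng.uniform(0.0, 360.0),
        "clutter_count": rng.randint(0, 8),
    }

def generate_scenes(count, seed=0):
    """Generate `count` scenes; a fixed seed makes the batch reproducible."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(count)]

scenes = generate_scenes(1000, seed=42)
materials_seen = {scene["material"] for scene in scenes}
```

Seeding the generator is a deliberate choice: a failure observed in training can be traced back to the exact scene that produced it.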

🔑 Takeaway: Instead of getting lost in grand narratives, anchor your tech in a real pain point and use simulation to solve the data scarcity problem.

4. Closing the “Sim-to-Real” Gap

Company: AGIBOT

The Challenge: The “last mile” for industrial robots is the discrepancy between simulation and reality. A robot may perform flawlessly in a digital environment but stumble on the factory floor.

The Solution: AGIBOT’s philosophy is: “Rehearse in the digital twin to ensure first-time success in the physical world.” Mo Wu, Head of Simulation at AGIBOT, unveiled Genie Sim 3.0, a comprehensive simulation deployment toolbox:

- Voice-to-Scene Generation: Using natural language to generate editable, massive-scale scenes in minutes.

- High-Fidelity Reconstruction: Fusing 3D Gaussian Splatting with physics engines to achieve <10% discrepancy between sim and real-world testing.

- AI Evaluator: Utilizing VLM/LLM-based automatic profiling to replace expensive and time-consuming physical testing.

🔑 Takeaway: Simulation isn’t just a substitute for reality; it’s the insurance policy that ensures your real-world attempt is a success.

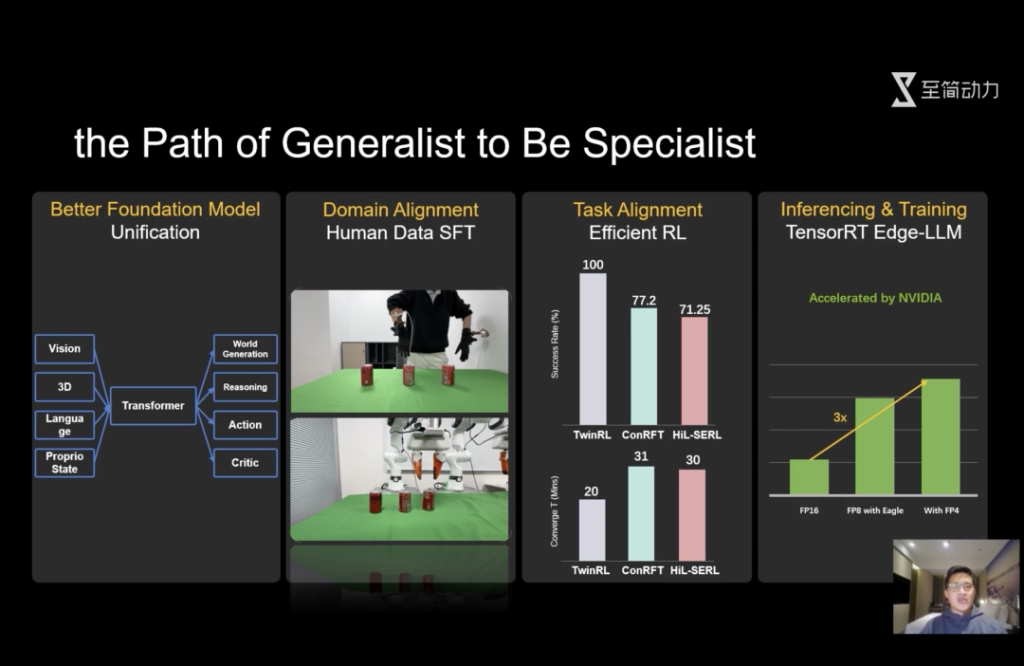

5. The Power of Subtraction: Achieving 100% Success in the Factory

Company: Simplexity Robotics

The Challenge: Amidst the “architectural wars” of VLA vs. World Models, Peng Jia (CEO of Simplexity Robotics, previously at Li Auto and NVIDIA) focuses on the only metric that matters in manufacturing: a 100% success rate.

The Solution: Instead of adding complexity, Peng Jia advocates for “Subtraction”—merging all capabilities into a unified, lean architecture.

- The “LaST₀” Foundation Model: A “Grand Unification” model that integrates multimodal understanding, “fast and slow” thinking, and policy evaluation using a cost-effective MoT (Mixture of Tokens) architecture.

- Extreme Efficiency: A 14x increase in inference speed. Robots can achieve 100% success rates on new downstream tasks with just 20 minutes of generalization.

- Token Distillation: While others worry about “token explosion,” Simplexity found that each modality can be distilled down to as little as a single token without losing core functionality.

🔑 Takeaway: In a complex physical world, the most robust solution is often the simplest. Return to fundamentals with a unified architecture.

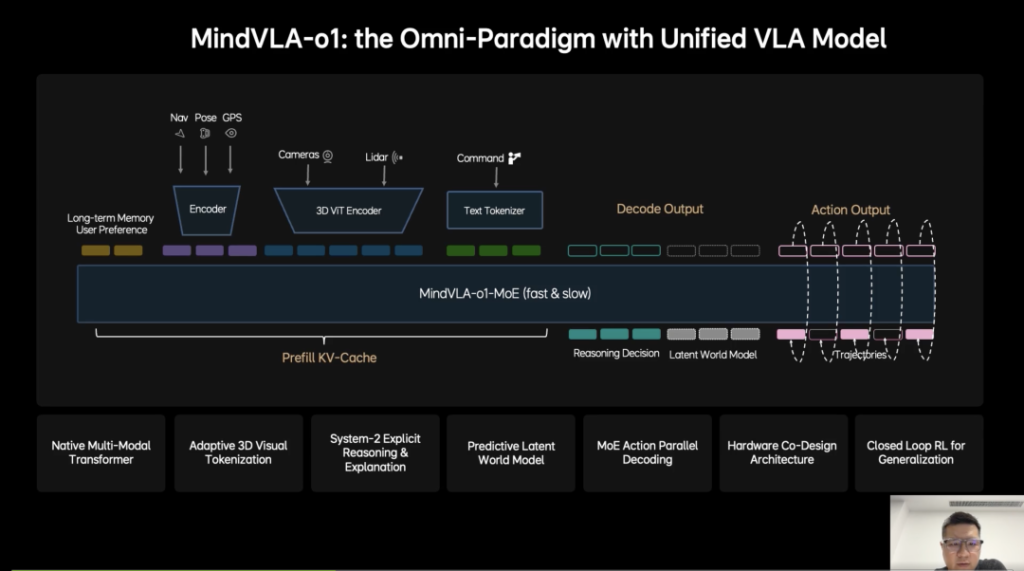

6. Vehicles as “Silicon-Based Life”: The Future of Autonomous Mobility

Company: Li Auto

The Challenge: Li Auto isn’t just building a smarter car; they are building the “brain” for the next generation of physical agents. Kun Zhan, Foundation Model Lead, views the vehicle as the largest-scale robot currently in deployment.

The Solution: The MindVLA-o1 model introduces five key innovations to push the boundaries of autonomous systems:

- 3D Spatial Reasoning: Fusing vision and LiDAR for a true 3D understanding of the environment.

- Predictive World Models: Moving beyond perception to “imagination,” allowing the AI to infer scene changes seconds into the future.

- VLA-MoE Architecture: Parallel decoding for driving trajectories that feel human and dynamically feasible.

- Accelerated Evolution: Using a world simulator to reduce training costs by 75% while accelerating iteration.

- Hardware-Software Co-Design: Slashing architecture exploration time from months to days by balancing accuracy with inference latency.

🔑 Takeaway: When leading automakers apply the mindset of creating “digital life” to autonomous driving, the technological horizon expands far beyond the road.